AWS

Ascend Enterprise can be deployed into a customer's AWS account. This page describes the AWS resources Ascend Enterprise requires, how to get Ascend Enterprise deployed, and what to expect for maintenance and access.

Required Resources

Ascend Enterprise (AWS) uses the following AWS services:

- EKS

- CloudWatch

- CloudTrail

- EC2

- Auto-scaling Groups

- ELB (Elastic Load Balancer)

- IAM

- KMS (Key Management Service)

- RDS

- S3

Deployment Preparation

This section describes the 5 steps to prepare a dedicated AWS account for the Ascend Enterprise deployment.

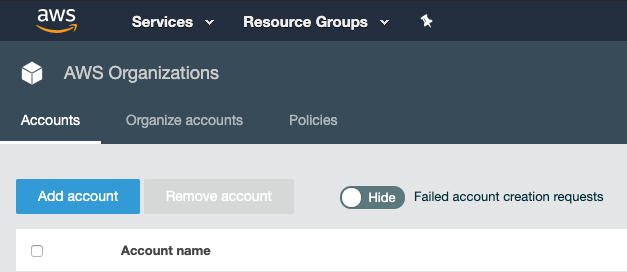

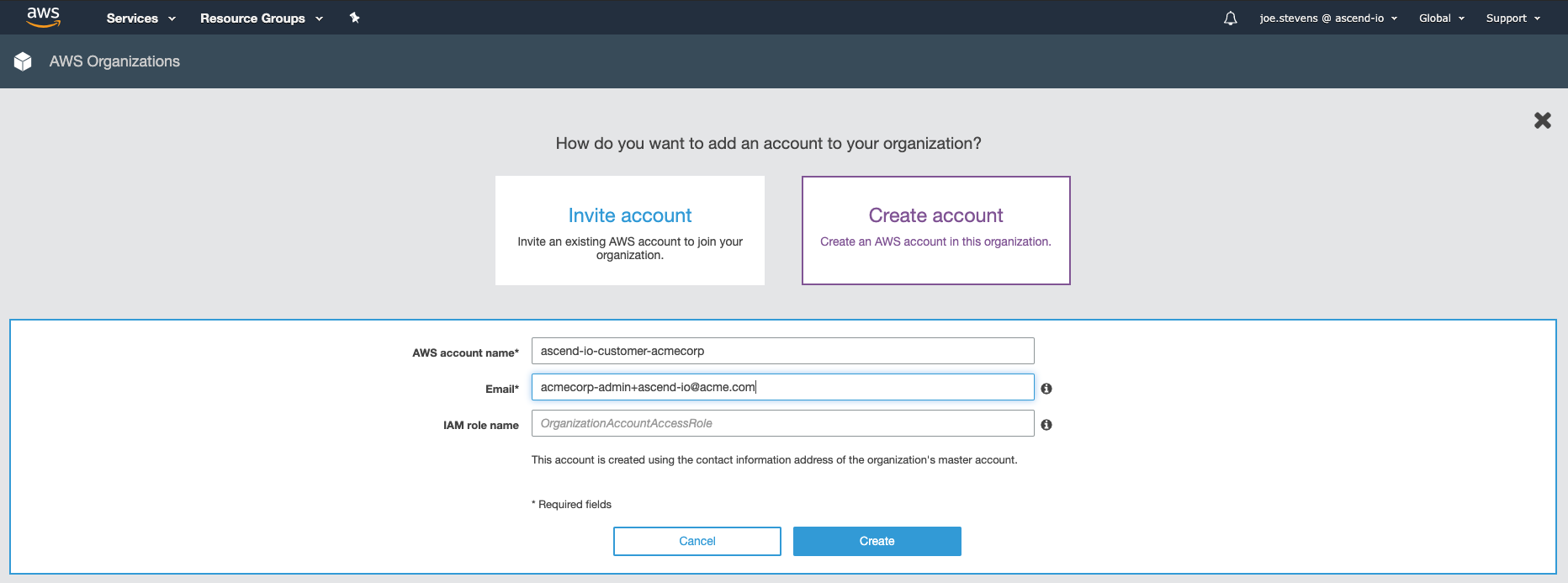

Step 1: Create Dedicated AWS Account

Please create a separate AWS account within your organization (see AWS documentation here).

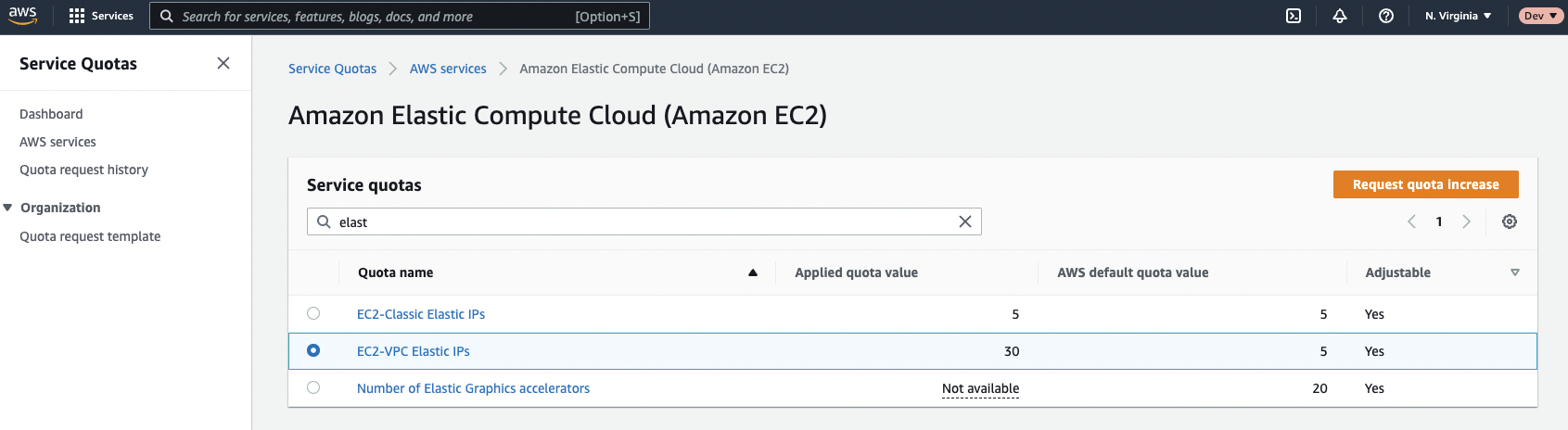

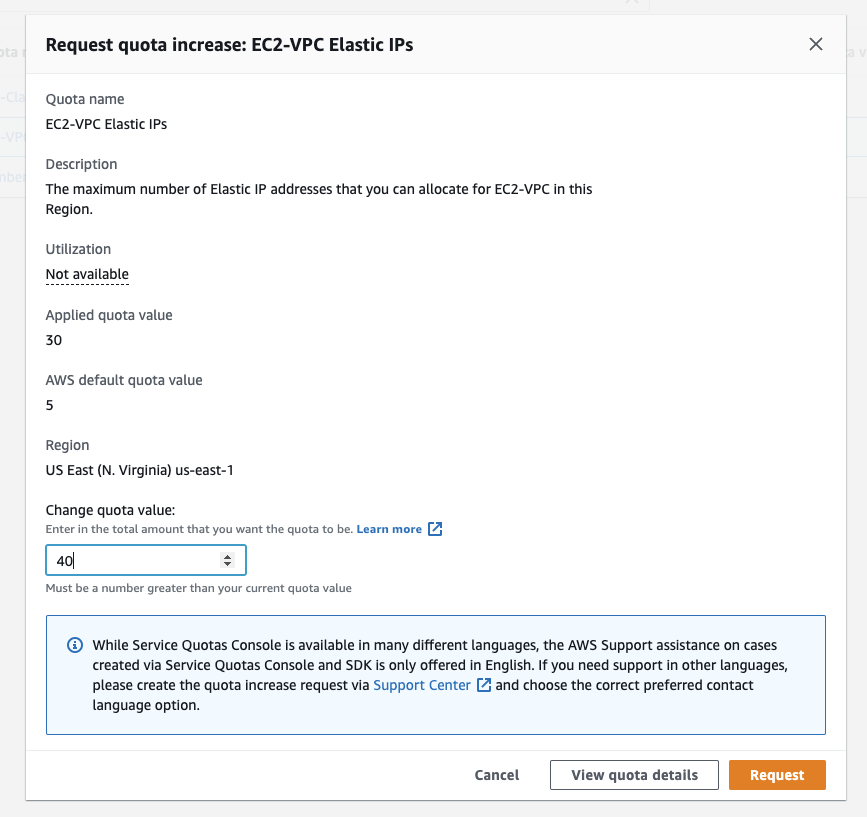

Step 2: Request Resource Limit Increases

With the account you've created in step 1, please request an increase in EC2-VPC Elastic IPs here. The new quota must be at least 8 IPs. Typically these requests are approved in a few minutes.
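If you prefer to script the quota check rather than use the console, the following is a minimal sketch. It assumes a client that behaves like `boto3.client("service-quotas")`, and the quota code `L-0263D0A3` for "EC2-VPC Elastic IPs" is an assumption that you should confirm in the Service Quotas console. The function name `ensure_eip_quota` is hypothetical.

```python
# Quota code for "EC2-VPC Elastic IPs" in Service Quotas. This is an
# assumption; confirm it in the Service Quotas console before relying on it.
EIP_QUOTA_CODE = "L-0263D0A3"
REQUIRED_EIPS = 8  # minimum stated in this guide


def ensure_eip_quota(client, required=REQUIRED_EIPS):
    """Request an Elastic IP quota increase if the current value is too low.

    `client` is expected to behave like boto3.client("service-quotas").
    Returns True when a request was submitted, False when none was needed.
    """
    current = client.get_service_quota(
        ServiceCode="ec2", QuotaCode=EIP_QUOTA_CODE
    )["Quota"]["Value"]
    if current >= required:
        return False
    client.request_service_quota_increase(
        ServiceCode="ec2",
        QuotaCode=EIP_QUOTA_CODE,
        DesiredValue=float(required),
    )
    return True
```

With boto3 installed and credentials for the new account, this could be called as `ensure_eip_quota(boto3.client("service-quotas", region_name=...))`, using your intended deployment region.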

Step 3: Grant Ascend Access

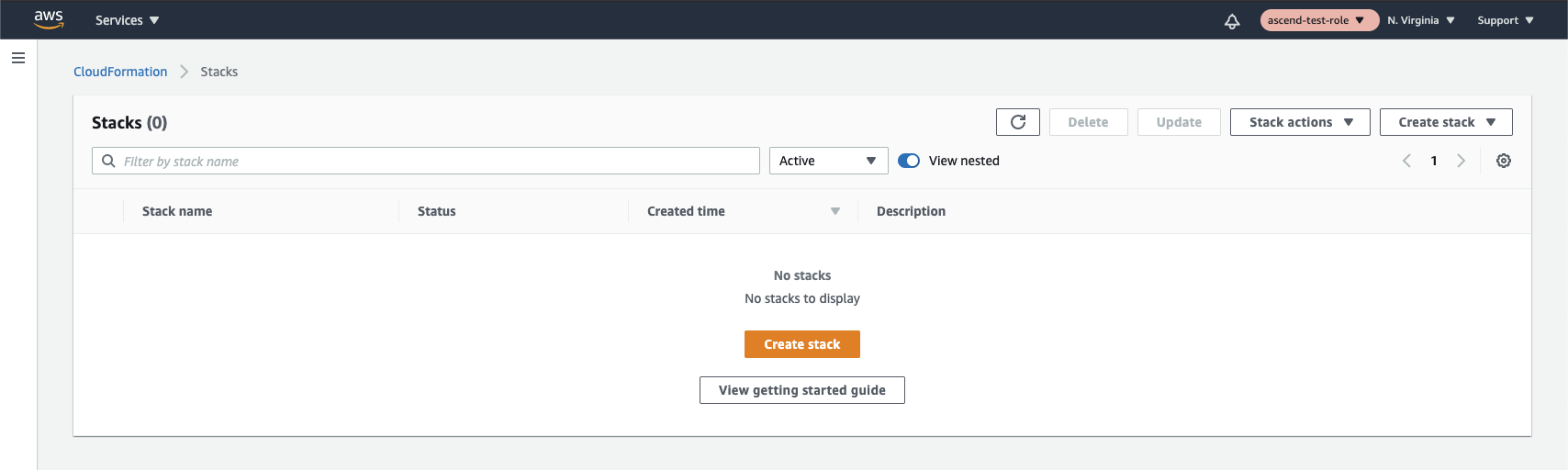

- Navigate to the CloudFormation service and create a new stack:

Choose the Correct Region: Make sure that you are creating the CloudFormation stack in the same region as your desired Ascend deployment region, otherwise the access will not work correctly (Ascend's access with this template is scoped to the region where it's deployed).

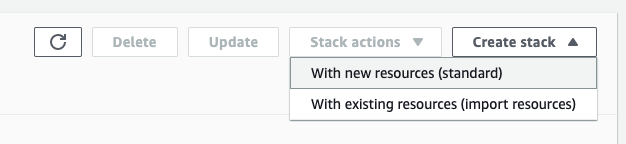

Make sure to select "With new resources (standard)" when creating the stack.

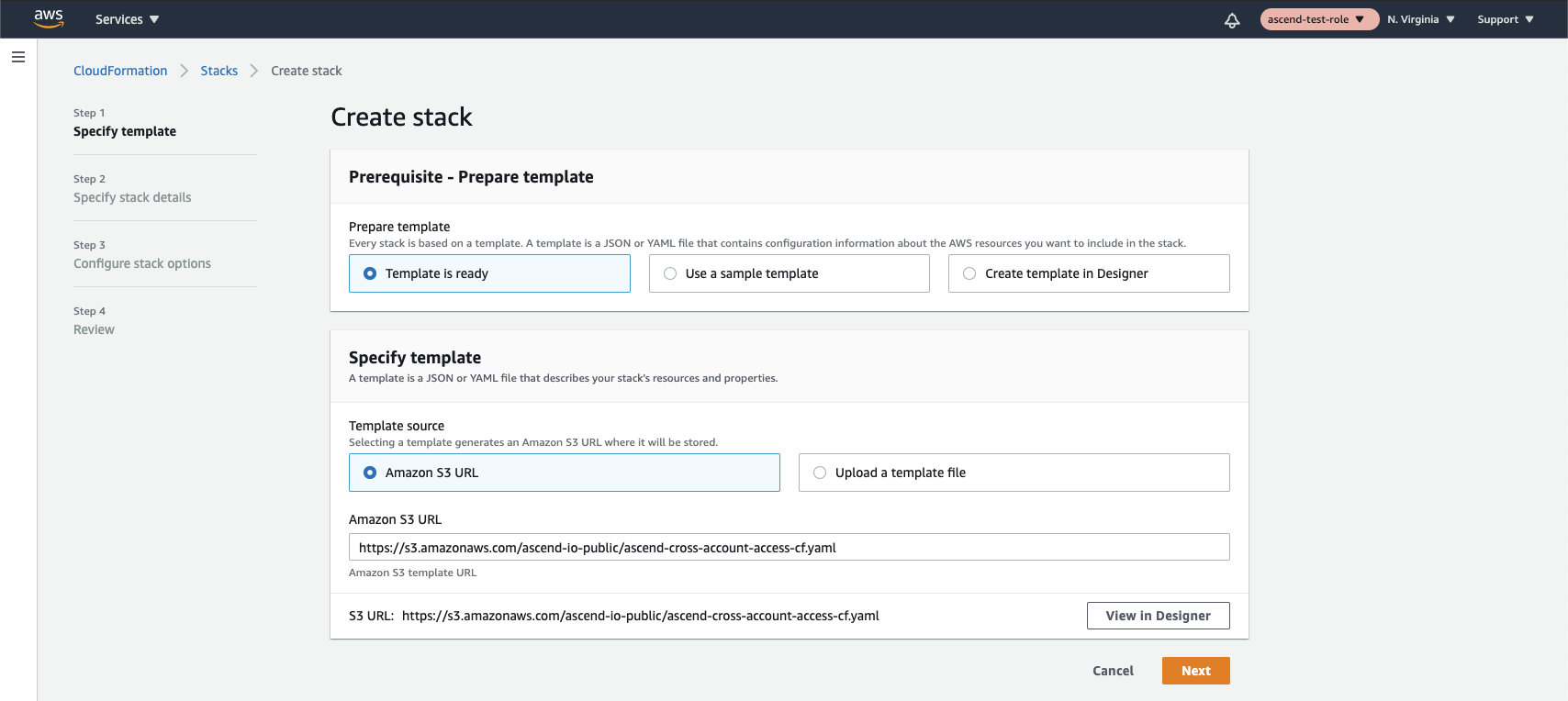

- Select "Template is ready", and choose "Amazon S3 URL" as the source. Then input https://s3.amazonaws.com/ascend-io-public/ascend-cross-account-access-cf.yaml as the source URL. You can check the permissions of the template in the CloudFormation designer or download it to view from here.

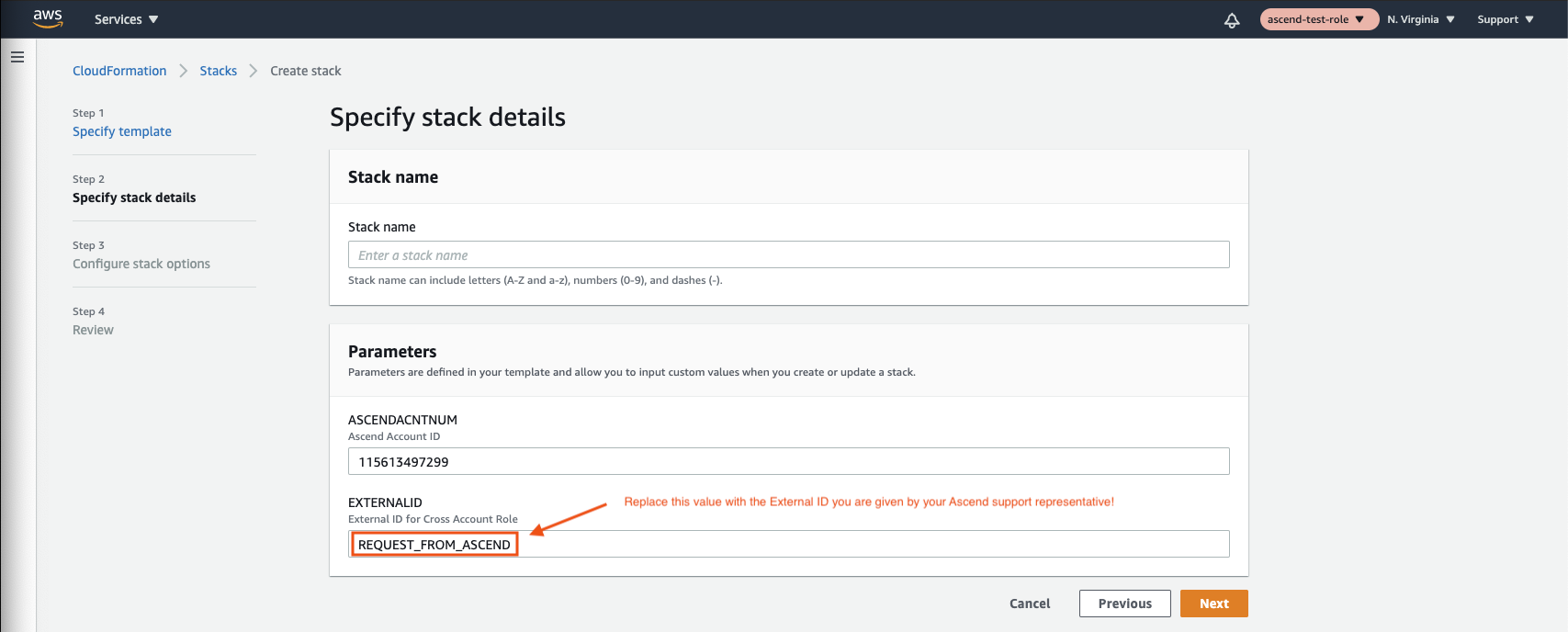

- Name the stack anything you like. You will need an External ID from Ascend, though: send an email to [email protected] requesting one, and wait to receive your External ID from Ascend Support before continuing.

Make sure to replace REQUEST_FROM_ASCEND with your External ID: Your Ascend support representative will send you an External ID that looks like a random universally unique identifier (UUID). You must replace the placeholder value REQUEST_FROM_ASCEND in the screenshot above with the value given to you by your Ascend support representative. Failure to do so may delay your environment deployment.

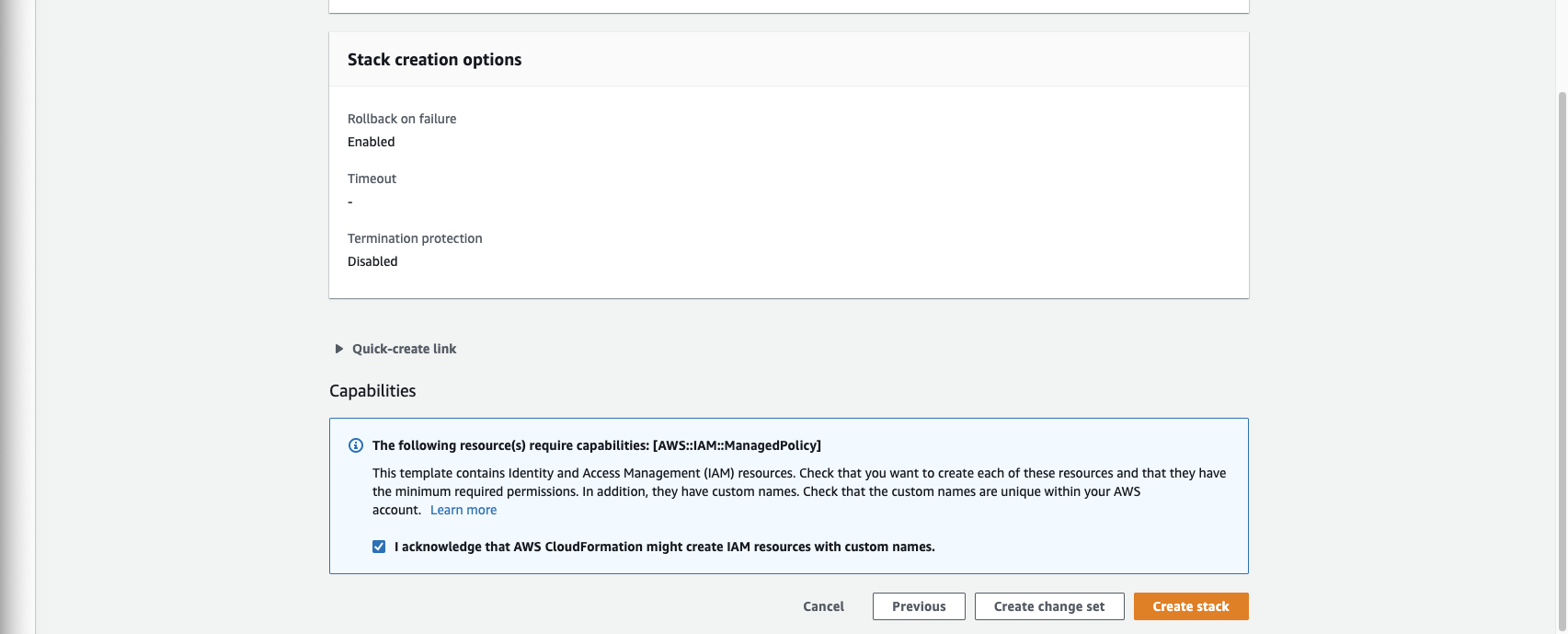

You can now skip through to the end to create the stack. On the review page, you will need to check the box acknowledging that this stack creates IAM resources.

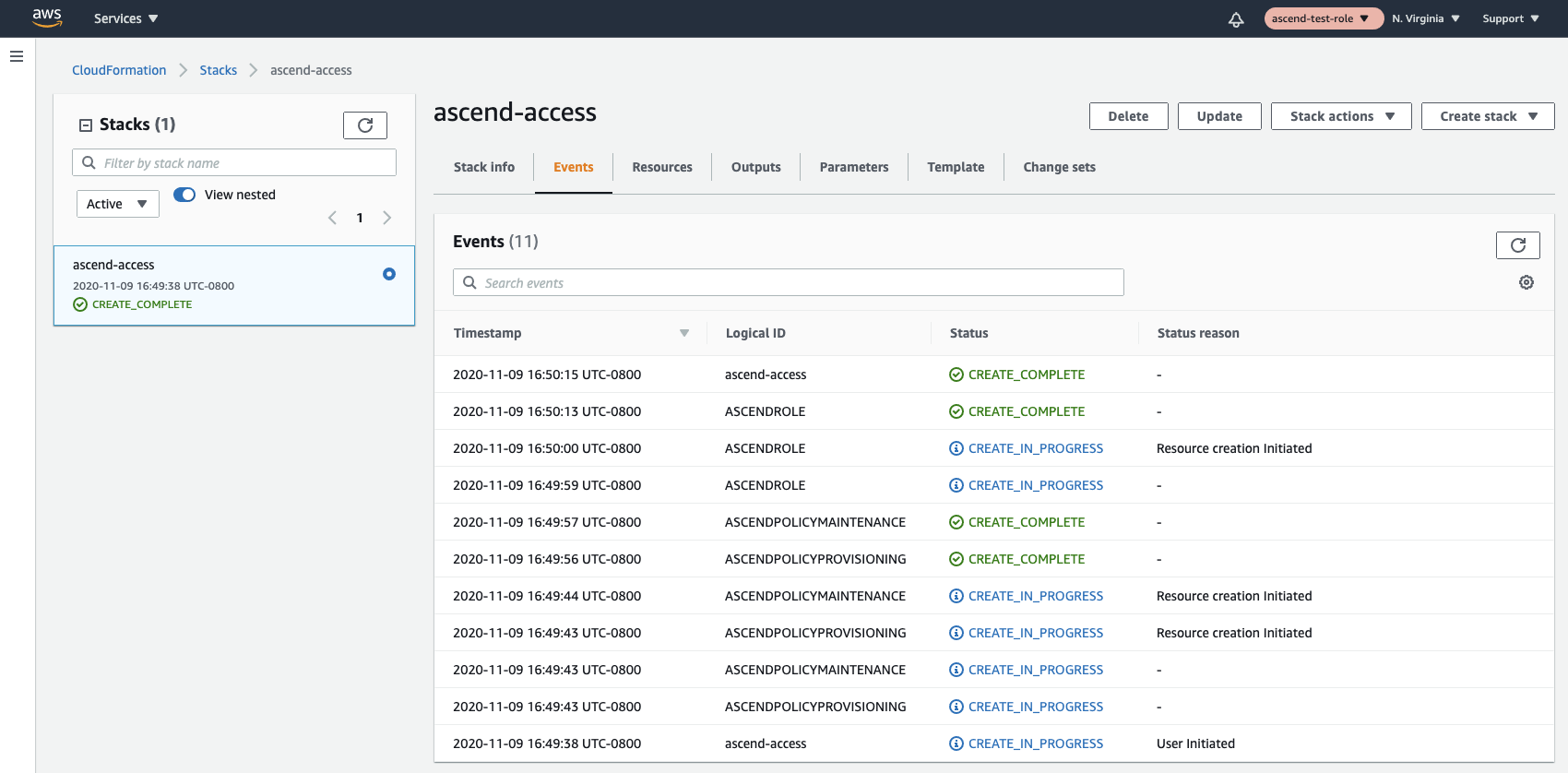

Finally, verify that all resources come up correctly. If you have any problems, feel free to email [email protected] and we'll help you get the problem sorted out.
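For those who script stack creation instead of using the console, here is a hedged sketch of the same step. It rejects the `REQUEST_FROM_ASCEND` placeholder, checks that the External ID parses as a UUID, and builds keyword arguments suitable for boto3's CloudFormation `create_stack` call. The parameter key `EXTERNALID` is inferred from the stack parameter name mentioned later on this page and should be checked against the template. The helper names are hypothetical.

```python
import uuid

TEMPLATE_URL = (
    "https://s3.amazonaws.com/ascend-io-public/"
    "ascend-cross-account-access-cf.yaml"
)
PLACEHOLDER = "REQUEST_FROM_ASCEND"


def validate_external_id(value):
    """Return the External ID in canonical UUID form, or raise ValueError."""
    value = value.strip()
    if value == PLACEHOLDER:
        raise ValueError("replace the placeholder with the External ID from Ascend")
    try:
        return str(uuid.UUID(value))
    except ValueError:
        raise ValueError(f"External ID does not look like a UUID: {value!r}") from None


def create_stack_args(stack_name, external_id, param_key="EXTERNALID"):
    """Build keyword arguments for a CloudFormation create_stack call."""
    # param_key is an assumption; check the template's Parameters section.
    return {
        "StackName": stack_name,
        "TemplateURL": TEMPLATE_URL,
        "Parameters": [
            {
                "ParameterKey": param_key,
                "ParameterValue": validate_external_id(external_id),
            }
        ],
        # Scripted equivalent of the IAM acknowledgement checkbox.
        "Capabilities": ["CAPABILITY_NAMED_IAM"],
    }
```

The result could be passed as `boto3.client("cloudformation", region_name=...).create_stack(**args)`. Because Ascend's access is scoped to the region where the stack is deployed, the client's region should be your desired Ascend deployment region.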

Step 4: Change AWS Support Plan

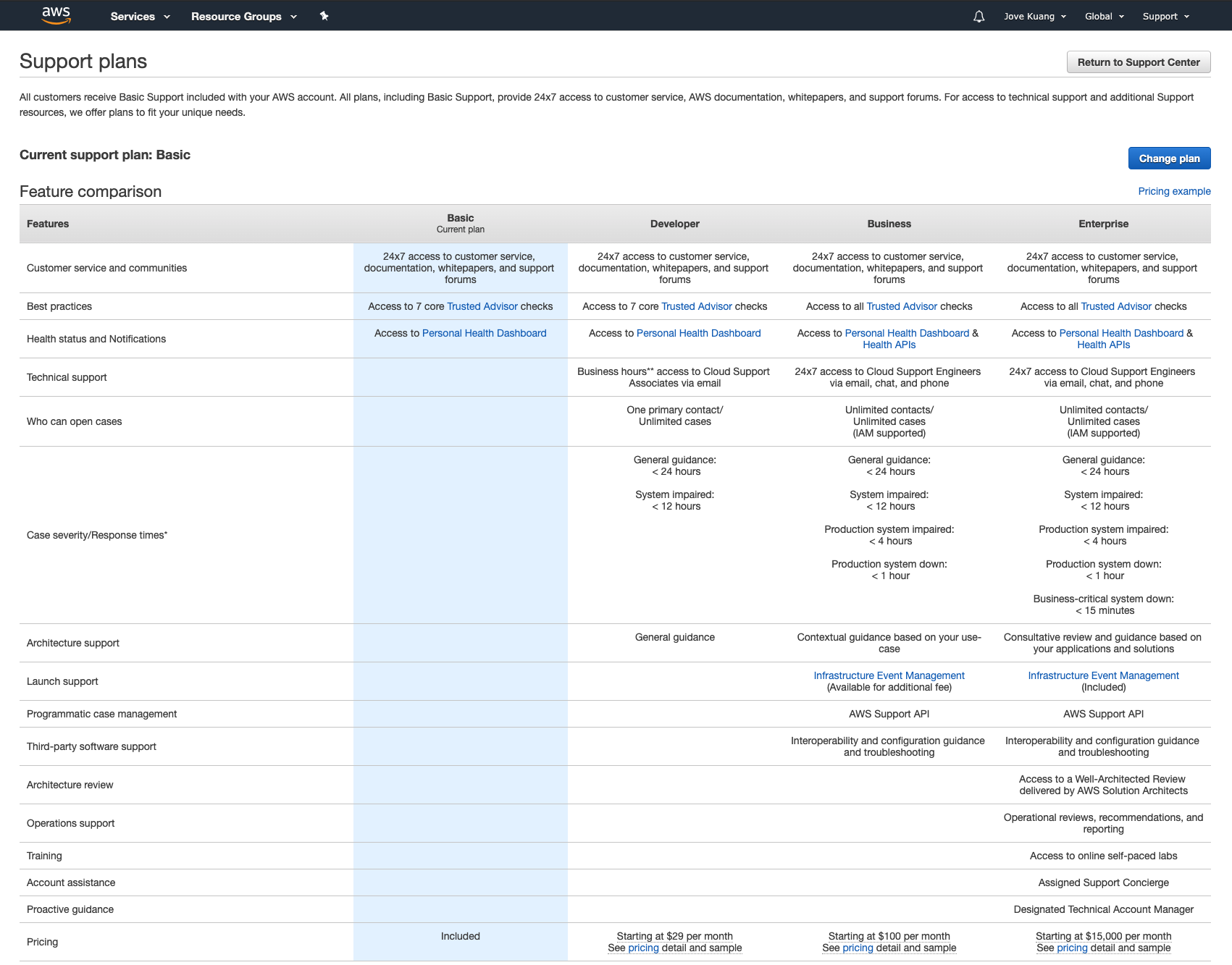

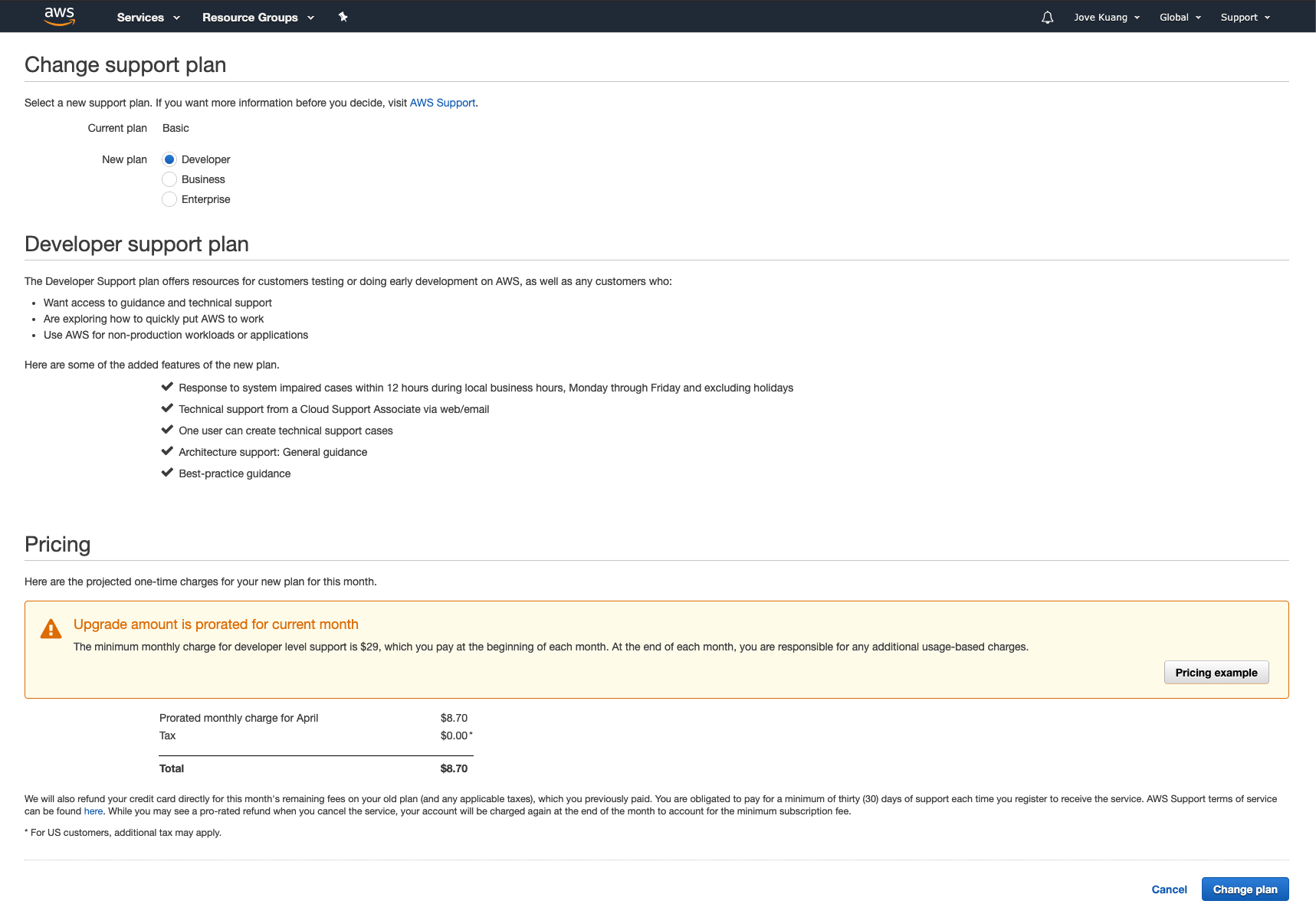

Please change the AWS support plan to “Developer” or higher if your organization does not already have one.

AWS Partnership and Ascend Support: As part of our partnership with Amazon Web Services (AWS), we ask you to upgrade your support plan. This helps us quickly bring in AWS support when needed, making issue resolution more efficient for you.

Step 5: Email Ascend Support

Once the above 4 steps are completed, please send an email to Ascend at [email protected] including the following:

- The AWS Account ID you have created.

- The desired region for the Ascend Enterprise deployment.

- The desired URL for Ascend Enterprise, e.g. your_company.ascend.io.

- The desired SSO options, such as Google, Okta, OneLogin, etc. Please follow our documentation for Okta and OneLogin to complete setup.

- The IAM Role ARN for the IAM role created in Step 3.

- Whether you need BYON. If you do, please follow these steps.
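Before sending the email, it can help to sanity-check the identifiers in it. The sketch below is only an illustration: it checks that the account ID has 12 digits and that the IAM role ARN has the usual `arn:aws:iam::<account>:role/<name>` shape and belongs to the same account. It assumes the standard `aws` partition, and `check_request` is a hypothetical helper name.

```python
import re

ACCOUNT_ID_RE = re.compile(r"\d{12}")
# Assumes the standard "aws" partition; other partitions use a different prefix.
ROLE_ARN_RE = re.compile(r"arn:aws:iam::(\d{12}):role/[A-Za-z0-9+=,.@_/-]+")


def check_request(account_id, role_arn):
    """Return a list of problems; an empty list means the values look consistent."""
    problems = []
    account_ok = ACCOUNT_ID_RE.fullmatch(account_id) is not None
    if not account_ok:
        problems.append("AWS account ID should be exactly 12 digits")
    match = ROLE_ARN_RE.fullmatch(role_arn)
    if match is None:
        problems.append("role ARN should look like arn:aws:iam::<account>:role/<name>")
    elif account_ok and match.group(1) != account_id:
        problems.append("role ARN belongs to a different account")
    return problems
```

An empty result does not prove the role exists; it only catches copy-and-paste mistakes before Ascend Support has to ask for corrections.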

Deployment Process

Once Ascend receives the email containing the required information, Ascend will proceed with the deployment. This process usually completes within 48 hours after Ascend receives all required information.

Once the deployment has completed, the customer will have access to Ascend Enterprise at the preferred URL, e.g. your_company.ascend.io.

Maintenance & AWS Account Access

Ascend will be responsible for new software releases and maintenance of all services within this AWS account.

Customer Access

The customer will retain access to this AWS account for auditing purposes. Ascend does not recommend hosting any services other than Ascend Enterprise in this AWS account.

Ascend Access

Ascend operational systems require access to the AWS account at all times to ensure reliability, performance, and code deployments. For long term maintenance, the customer may reduce Ascend's access by editing the CloudFormation template to remove the ASCENDPOLICYPROVISIONING policy, or swapping the template source to https://s3.amazonaws.com/ascend-io-public/ascend-cross-account-access-maintenance-cf.yaml, which you can download to view from here.

Updating CloudFormation Stack to Grant Provisioning Level Access for Ascend

- Navigate to the CloudFormation service.

- Select the stack granting Ascend access and click the “Update” button.

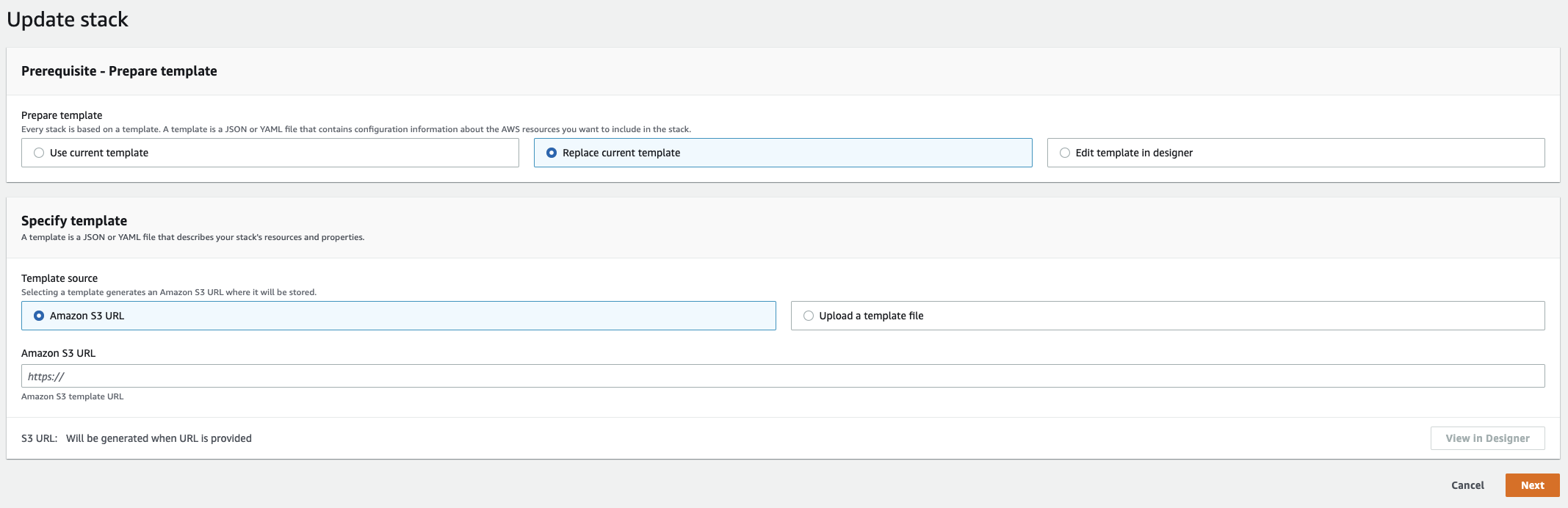

- Select Replace current template, and choose Amazon S3 URL as the source. Next, input https://s3.amazonaws.com/ascend-io-public/ascend-cross-account-access-cf.yaml as the S3 URL.

- Continue selecting Next on the subsequent pages to finish updating the stack (no other configuration is needed). For the final review page, select the box acknowledging this stack is creating IAM resources.

- Finally, verify that all resources have updated correctly. If you have any problems, feel free to email [email protected] and we’ll help you get the problem sorted out.

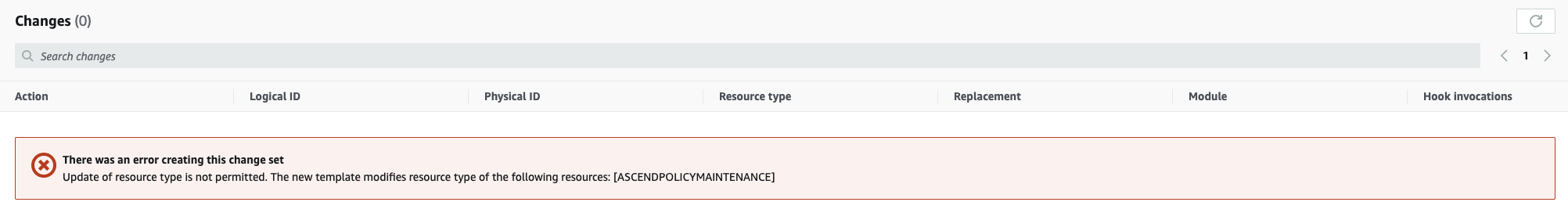

If you encounter an error like the one below, you will need to delete and recreate the CloudFormation stack.

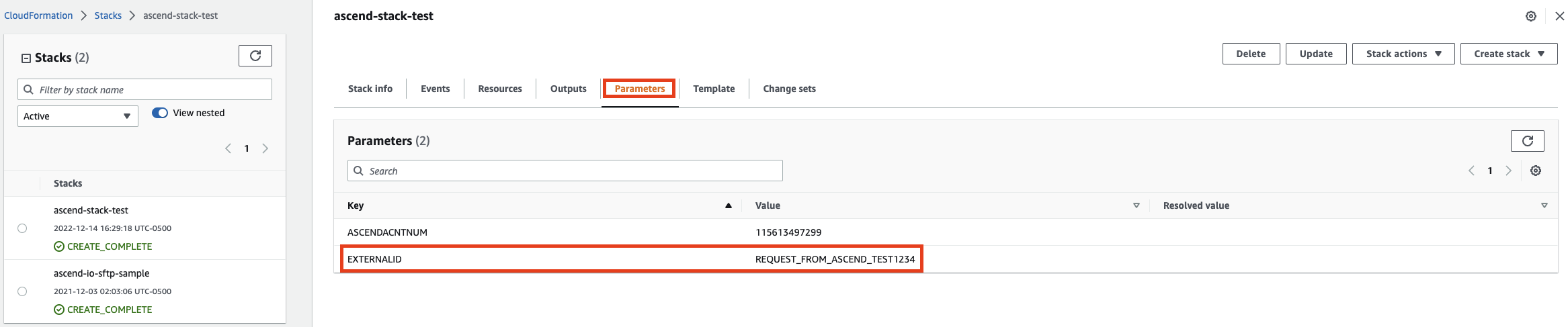

Make sure to save the EXTERNALID configured on the stack: Before deleting the stack, you must save the EXTERNALID configured on it, because creating the new stack requires it. Find EXTERNALID on the Parameters tab of the stack information. After deleting the stack, return to Step 3 to recreate the stack with provisioning level access.
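As a scripted alternative to the Parameters tab, the sketch below reads a parameter out of a response shaped like the one returned by boto3's CloudFormation `describe_stacks` call. The default key `EXTERNALID` follows the name used above, and `saved_parameter` is a hypothetical helper name.

```python
def saved_parameter(describe_stacks_response, key="EXTERNALID"):
    """Return the value of one stack parameter from a describe_stacks response."""
    stack = describe_stacks_response["Stacks"][0]
    for param in stack.get("Parameters", []):
        if param["ParameterKey"] == key:
            return param["ParameterValue"]
    raise KeyError(f"stack has no parameter named {key!r}")
```

For example, `saved_parameter(cfn.describe_stacks(StackName="..."))`, where `cfn` is a CloudFormation client and the stack name is whatever you chose in Step 3. Store the value somewhere safe before deleting the stack.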

Once upgrades are complete, reduce Ascend’s permissions to Maintenance level by updating the CloudFormation stack again, this time using https://s3.amazonaws.com/ascend-io-public/ascend-cross-account-access-maintenance-cf.yaml as the S3 URL.
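Switching between the two access levels amounts to updating the same stack with a different template URL. The sketch below builds keyword arguments for boto3's CloudFormation `update_stack` call. It assumes both templates take the same `EXTERNALID` parameter, which is why the previous value is reused, and `update_stack_args` is a hypothetical helper name.

```python
TEMPLATES = {
    "provisioning": (
        "https://s3.amazonaws.com/ascend-io-public/"
        "ascend-cross-account-access-cf.yaml"
    ),
    "maintenance": (
        "https://s3.amazonaws.com/ascend-io-public/"
        "ascend-cross-account-access-maintenance-cf.yaml"
    ),
}


def update_stack_args(stack_name, level, param_key="EXTERNALID"):
    """Build keyword arguments for update_stack at the given access level."""
    if level not in TEMPLATES:
        raise ValueError(f"level must be one of {sorted(TEMPLATES)}")
    return {
        "StackName": stack_name,
        "TemplateURL": TEMPLATES[level],
        # Keep the External ID already stored on the stack (assumed key name).
        "Parameters": [{"ParameterKey": param_key, "UsePreviousValue": True}],
        # Scripted equivalent of the IAM acknowledgement checkbox.
        "Capabilities": ["CAPABILITY_NAMED_IAM"],
    }
```

Calling it with `"provisioning"` before an upgrade and `"maintenance"` afterwards mirrors the console procedure described in this section.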

Ascend AWS Resources

IAM Resources

Ascend's platform differs from typical data platforms in that it lets users launch custom code workloads on the Ascend platform while limiting that code to the data that its author is permitted to access. Part of the way we enforce that is by not granting instance-level roles for data access. In fact, we cut all pods off from the EC2 metadata service in order to eliminate that risk.

To facilitate operations, we instead mount credentials to pods as needed, depending on the requirements of each pod. More specifically, the credentials mounted to a pod can do only the very minimum that the pod must do.

As part of this, we create 6 AWS IAM users and key pairs.

Basic AWS Users

The first of these users is used for the sole purpose of accessing S3 data. In Enterprise plans, it is paired with bucket-level access origin protections in order to further guarantee that all data access comes from trusted processes in the customer's Data Plane.

The next two users are used by the cluster autoscaler process in each of the two Kubernetes clusters. They are granted basic autoscaling describe permissions, plus the ability to set capacity and terminate instances in the autoscaling groups they manage.
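To make the scope of the autoscaler users concrete, here is an illustrative policy document built in Python. It is not Ascend's actual policy; it only shows what "describe, set capacity, and terminate" could look like using standard Auto Scaling IAM actions. The function name and the ARNs passed to it are hypothetical.

```python
def autoscaler_policy(asg_arns):
    """Return an illustrative IAM policy document for a cluster autoscaler user."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Read-only discovery of groups and their instances.
                "Effect": "Allow",
                "Action": [
                    "autoscaling:DescribeAutoScalingGroups",
                    "autoscaling:DescribeAutoScalingInstances",
                ],
                "Resource": "*",
            },
            {
                # Scaling actions, limited to the groups this user manages.
                "Effect": "Allow",
                "Action": [
                    "autoscaling:SetDesiredCapacity",
                    "autoscaling:TerminateInstanceInAutoScalingGroup",
                ],
                "Resource": list(asg_arns),
            },
        ],
    }
```

Serializing the result with `json.dumps` gives a document you can compare against the policies you see when auditing the account.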

EKS AWS Users

The next 3 users are specific to EKS access. Unlike the managed Kubernetes services from other clouds, EKS cannot grant access without a backing AWS entity. As such, for each use case of a Kubernetes service user that needs access from outside the cluster, there is an associated AWS user. All of these users look identical from an AWS permissions perspective, because the only access they have is the basic permissions required to attempt to authenticate with EKS. The actual permissions that they have are managed within the cluster itself.

The first of these users is the Data Plane access user, used by the Control Plane cluster to launch workloads on the Data Plane cluster. It only has access to manage pods in the Data Plane.

The second of these users is the external-dns user. We have a number of services in the Ascend environment that have external endpoints. In order to automate and make our operations more resilient to human error, we have an external dns service that watches the “ExternalIP” configuration of services in the clusters marked with a tag requesting DNS. It then updates DNS in our central ops environment according to the observed IP (or hostname). As such, the only access it has is kubernetes service list and describe.

Finally, we have the teleport user. This user is used to orchestrate limited, view-only access for Ascend engineers in Enterprise deployments. It allows basic ops triage without granting any access to the underlying clusters, creating a hard barrier between Ascend employees and customer data.

Extra Protections (Enterprise Package)

In the Enterprise Package, Ascend has constructed security in such a way that the credentials are completely cordoned off, and the credentials themselves are not sufficient to gain access to the customer's data. In addition, the data can only be accessed from within the customer's Data Plane, which prevents the access credential itself from being a single point of compromise.

Ascend Footprint

Resting Resources

The following resources are present when the environment is at rest. If you are looking to optimize costs, consider purchasing reservations for these resources.

Databases w/ active failover

- Core app config: 2x db.r-family.large ("core")

- Partition metadata: 2x db.r-family.large ("fragments")

EKS Cluster

1 EKS cluster ("main")

Team Size:

- 1x 4-core, ~32GB On Demand node (AWS r-family, xlarge)

- 3x 8-core, ~64GB Spot node (AWS r-family, 2xlarge)

Standard Size:

- 1x 8-core, ~64GB On Demand node (AWS r-family, 2xlarge)

- 3x 16-core, ~128GB Spot node (AWS r-family, 4xlarge)
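As a quick worked example, the node counts above can be summed to get the resting capacity of each size. The figures come directly from the lists above, and memory values are approximate because the source lists them as approximate.

```python
# (node count, cores per node, approximate GB of memory per node)
SIZES = {
    "Team": [(1, 4, 32), (3, 8, 64)],
    "Standard": [(1, 8, 64), (3, 16, 128)],
}


def resting_capacity(size):
    """Return (total cores, approximate total GB) for a deployment size at rest."""
    pools = SIZES[size]
    cores = sum(count * per_node for count, per_node, _ in pools)
    memory_gb = sum(count * per_node for count, _, per_node in pools)
    return cores, memory_gb


print(resting_capacity("Team"))      # (28, 224)
print(resting_capacity("Standard"))  # (56, 448)
```

In other words, the Standard size has twice the resting footprint of the Team size.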

Networking

3x NAT Gateways

Scaling Costs

The following costs scale with usage.

Data Ingest/Backfill

The two components with the greatest scaling cost during ingest are compute (spot nodes in both the Control and Compute clusters) and NAT Gateway transfer costs. From our observations, cost scales close to linearly with the volume of data ingested. Data backfill is typically the largest single cost that our customers see.

Steady State Processing

At a steady state, you are most likely to see low recurring costs of ingest (reflected in the spot nodes in the Control Cluster and Data processed costs in the NAT Gateways), and a larger percentage contribution from the Compute Cluster spot nodes, as they propagate new data through your Dataflows.

Notes

- If you need the On Demand node pinned to a certain type for Reservations, contact Ascend Support.

- Due to unpredictable spot availability, we are not currently able to make guarantees about the exact instance type of the spot nodes, only that they will have the same CPU/memory profile.

- In practice, we see Spot nodes cost about 30% of the price of On Demand nodes, but we can't make any guarantees about those costs.

- The spot pools autoscale in response to workload demands. By default, they max out at 30 nodes. If you would like a higher scaling limit, please contact Ascend Support.
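The notes above can be turned into a rough hourly compute estimate. This is a back-of-the-envelope sketch only: the 30% ratio is the observed figure from this page and is not guaranteed, the 30-node cap is the default limit, and any prices you pass in are your own inputs. The function name is hypothetical.

```python
SPOT_PRICE_RATIO = 0.30  # observed in practice per this page, not guaranteed
MAX_SPOT_NODES = 30      # default spot pool limit


def estimate_hourly_cost(on_demand_node_price, spot_type_on_demand_price, spot_nodes):
    """Estimate hourly compute cost for one On Demand node plus spot nodes.

    on_demand_node_price: hourly price of the single On Demand node.
    spot_type_on_demand_price: hourly On Demand price of the spot node's type.
    spot_nodes: number of spot nodes currently running.
    """
    if not 0 <= spot_nodes <= MAX_SPOT_NODES:
        raise ValueError(f"spot_nodes must be between 0 and {MAX_SPOT_NODES}")
    spot_cost = spot_nodes * spot_type_on_demand_price * SPOT_PRICE_RATIO
    return on_demand_node_price + spot_cost
```

Comparing the result at 3 spot nodes (the resting count) with the result at 30 gives a sense of the range between an idle environment and one at the default scaling limit. Database and NAT Gateway costs are not included.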
