Preparing a Custom Image

Prerequisites:

- A container registry to store and pull your custom image from (Ascend.io currently supports Red Hat Quay, Docker Hub, and Azure Container Registry)

Pull the latest Ascend production Spark image

Ascend uses Quay.io to host built Spark images. To download the latest images used in your environment:

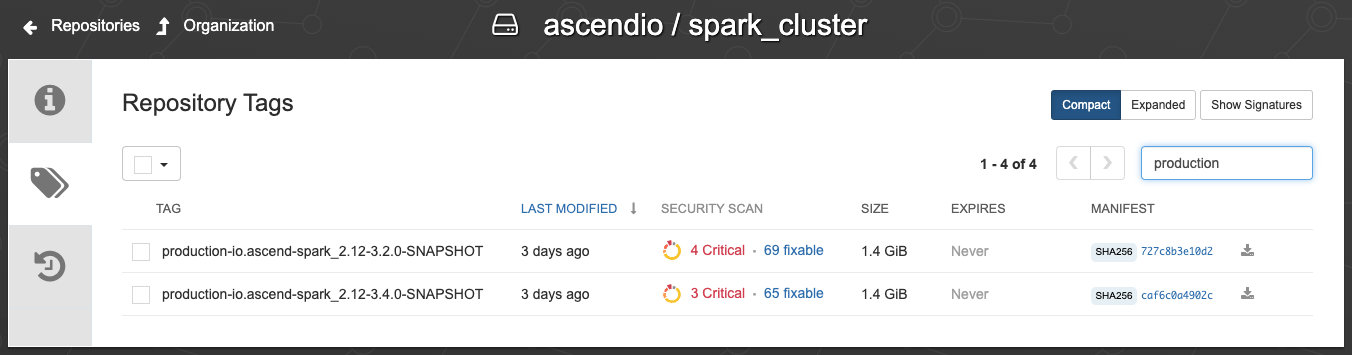

- Visit https://quay.io/repository/ascendio/spark_cluster?tab=tags

- In the "Filter Tags" search box on the right side, enter "production"

A list of production Ascend images

- Pull the image using Docker. For example:

docker pull quay.io/ascendio/spark_cluster:production-io.ascend-spark_2.12-3.4.0-SNAPSHOT

Understand the Ascend image file organization (optional)

This section is optional and only needed if you want to add custom scripts to the image for use in your dataflows.

Bash into a running container

- Copy the ID of the image you just pulled. You can view it with:

docker image ls

- Start a container from the image and open a shell:

docker run -it --rm <IMAGE_ID> bash

- Inside the container, change to the /app directory:

cd /app
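For a quick look without an interactive session, the same checks can be run as a single command. The sketch below is one possible way to do that. The helper names inspect_image and run, and the DRY_RUN switch, are hypothetical and not part of Ascend or Docker. It assumes bash is available in the image, as the interactive command above implies.

```shell
# Sketch: inspect the image without opening an interactive shell.
# Set DRY_RUN=1 to print the docker command instead of executing it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# inspect_image IMAGE: print PYTHONPATH and list /app inside a throwaway container.
inspect_image() {
  run docker run --rm "$1" bash -c 'echo "$PYTHONPATH"; ls /app'
}
```

After defining these functions, calling inspect_image <IMAGE_ID> prints the Python path and the contents of /app, then removes the container.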

The /ascend directory

- Run the following command. The output shows that /app is in the Python path:

echo $PYTHONPATH

Under /app, the ascend directory (/app/ascend) contains some utility functions and connection definitions. This enables imports such as the following, which lets you print debugging information in PySpark Transforms:

from ascend.log import logger

- To add your own utility functions, we suggest placing them in a directory under /app, which forms a structure like:

app/
  ascend/
  my_organization/

- To automate the process, use a Dockerfile to create the needed directory and copy your files in.
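The automation step above could be sketched as follows. This is a minimal example, not Ascend's own tooling: the function name write_utils_dockerfile and the BASE_IMAGE variable are hypothetical, and the base tag is simply the one from the pull example, so replace it with the tag you pulled.

```shell
# Sketch: generate a Dockerfile that copies local utilities into /app.
# BASE_IMAGE defaults to the tag from the pull example; use the tag you pulled.
BASE_IMAGE="${BASE_IMAGE:-quay.io/ascendio/spark_cluster:production-io.ascend-spark_2.12-3.4.0-SNAPSHOT}"

# write_utils_dockerfile DIR: create DIR/my_organization (if missing) and write DIR/Dockerfile.
write_utils_dockerfile() {
  mkdir -p "$1/my_organization"
  cat > "$1/Dockerfile" <<EOF
FROM ${BASE_IMAGE}

# Place custom modules next to /app/ascend so they are found via PYTHONPATH.
COPY my_organization/ /app/my_organization/
EOF
}
```

Run write_utils_dockerfile . in an empty build directory, put your Python modules under my_organization/, and then build the image with docker build as described in the next section.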

Build image with new Python libraries

As an example, we will install the popular arrow library, which is used to manipulate dates and timestamps.

- Create a new directory anywhere on your system:

mkdir test && cd test/

- Create a Dockerfile in the directory with the following content:

FROM quay.io/ascendio/spark_cluster:production-io.ascend-spark_2.12-3.4.0-SNAPSHOT

RUN pip install arrow

Remember to change the image tag to the one you previously pulled.

- Build the new image

docker build -t spark_image:prod .

Push the built image to your container registry

- Tag the new image with your registry's host name and port (refer to your registry's instructions)

For example, using quay.io:

docker image tag spark_image:prod quay.io/my_company/spark_image:prod

- Push the tagged image to the registry

docker push quay.io/my_company/spark_image:prod
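The build, tag, and push commands can be combined into one helper so they always run in the same order. The following is only a sketch. The function names remote_ref, publish_image, and run, and the DRY_RUN switch, are hypothetical. It assumes you have already authenticated to your registry (for example with docker login) and that the Dockerfile is in the current directory.

```shell
# Sketch: build, tag, and push in one step.
# Set DRY_RUN=1 to print the docker commands instead of executing them.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# remote_ref REGISTRY NAMESPACE NAME TAG: compose the fully qualified image reference.
remote_ref() {
  printf '%s/%s/%s:%s\n' "$1" "$2" "$3" "$4"
}

# publish_image LOCAL_IMAGE REMOTE_IMAGE: build from the current directory, tag, then push.
# Each step runs only if the previous one succeeded.
publish_image() {
  run docker build -t "$1" . &&
  run docker image tag "$1" "$2" &&
  run docker push "$2"
}
```

With the example names from this tutorial, the call would be publish_image spark_image:prod "$(remote_ref quay.io my_company spark_image prod)". Running it once with DRY_RUN=1 shows the exact commands before anything is pushed.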

Keeping your image up to date

Ascend releases new Spark images when we add new functionality or change existing implementations. This is typically done on a weekly basis.

We recommend regularly rebuilding your image against the latest Ascend image and pushing it to your registry, so that you pick up the latest features.
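One way to make regular rebuilds less error-prone is to pass the base image tag as a Docker build argument, so the Dockerfile does not need editing for each new Ascend release. The sketch below illustrates the idea. The names write_dockerfile, rebuild, run, BASE_TAG, and DRY_RUN are hypothetical, and the arrow install is carried over from the example in this tutorial.

```shell
# Sketch: make the base image tag a build argument for easy rebuilds.
# Set DRY_RUN=1 to print the docker command instead of executing it.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# write_dockerfile DIR: write a Dockerfile whose base tag is supplied at build time.
write_dockerfile() {
  cat > "$1/Dockerfile" <<'EOF'
# BASE_TAG has no default on purpose; pass it with --build-arg.
ARG BASE_TAG
FROM quay.io/ascendio/spark_cluster:${BASE_TAG}

RUN pip install arrow
EOF
}

# rebuild DIR BASE_TAG: rebuild the custom image on top of the given Ascend tag.
rebuild() {
  run docker build --build-arg "BASE_TAG=$2" -t spark_image:prod "$1"
}
```

After write_dockerfile . has been run once, each update is a call such as rebuild . <NEW_TAG>, where <NEW_TAG> is the production tag currently listed on Quay.io, followed by the tag and push steps described above.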

Summary

In this tutorial, we walked through the end-to-end process of acquiring and extending Ascend's native Spark images. In the next section, we will demonstrate how to run Ascend Cluster Pools using the newly generated image.
