Read Connectors

Read Connectors build on Connections that have either Read-Only or Read-Write access. A Read Connector connects to a source and synchronizes the data coming into a Dataflow. Ascend supports many out-of-the-box connectors, as well as a Custom Read Connectors framework for writing your own.

Replication Strategies

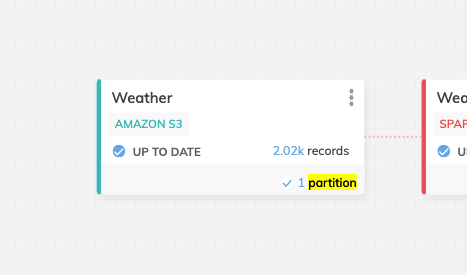

Ascend uses replication strategies within Read Connectors to read a table from a database into Ascend. Replication Strategy options will differ based on the Connection selected. Below are some of the possible options:

- Incremental: a new partition is created each time the Read Component runs. This partition contains the new or updated records, identified by the selected column.

- Full ReSync: there is only one partition. Each time the Read Component runs, that partition is replaced with the newest copy of the table.

- Table Snapshot: a new partition is created each time the Read Component runs. The new partition contains a full copy of the table.

- Change Data Capture: a new partition is added each time the Read Component runs. The new partition contains the changes made to the table, including additions, updates, and deletes.
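To make the partition behavior of the four strategies concrete, here is a minimal sketch in plain Python. It is a toy model, not Ascend's API: the `ReadComponent` class, the `simulate` helper, the strategy strings, and the `id` and `updated_at` columns are all hypothetical names chosen for illustration. The Change Data Capture branch diffs two table states to produce changes, which is an assumption made to keep the example self-contained; a real database typically exposes changes through its own change log.

```python
from dataclasses import dataclass, field


@dataclass
class ReadComponent:
    """Toy stand-in for a Read Component (hypothetical, not Ascend's API)."""
    strategy: str  # "incremental", "full_resync", "table_snapshot", or "cdc"
    partitions: list = field(default_factory=list)
    high_water_mark: int = -1                      # state for "incremental"
    last_seen: dict = field(default_factory=dict)  # state for "cdc", keyed by id

    def run(self, table):
        """Simulate one run against the current contents of the source table."""
        rows = [dict(r) for r in table]  # copy so later source edits do not leak in
        if self.strategy == "full_resync":
            # Single partition, replaced on every run.
            self.partitions = [rows]
        elif self.strategy == "table_snapshot":
            # New partition per run, each holding a full copy.
            self.partitions.append(rows)
        elif self.strategy == "incremental":
            # New partition per run with rows past the selected column's high-water mark.
            fresh = [r for r in rows if r["updated_at"] > self.high_water_mark]
            if fresh:
                self.high_water_mark = max(r["updated_at"] for r in fresh)
            self.partitions.append(fresh)
        elif self.strategy == "cdc":
            # New partition per run holding inserts, updates, and deletes.
            # Assumption: changes are derived by diffing against the previous run.
            current = {r["id"]: r for r in rows}
            changes = []
            for key, row in current.items():
                if key not in self.last_seen:
                    changes.append({"op": "insert", **row})
                elif row != self.last_seen[key]:
                    changes.append({"op": "update", **row})
            for key, row in self.last_seen.items():
                if key not in current:
                    changes.append({"op": "delete", **row})
            self.partitions.append(changes)
            self.last_seen = current
        else:
            raise ValueError(f"unknown strategy: {self.strategy}")


# Two successive states of a made-up source table:
# between runs, id 1 is updated, id 2 is deleted, and id 3 is added.
RUN_1 = [
    {"id": 1, "value": "a", "updated_at": 1},
    {"id": 2, "value": "b", "updated_at": 1},
]
RUN_2 = [
    {"id": 1, "value": "a2", "updated_at": 2},
    {"id": 3, "value": "c", "updated_at": 2},
]


def simulate(strategy, runs=(RUN_1, RUN_2)):
    """Run a fresh component once per table state and return its partitions."""
    component = ReadComponent(strategy)
    for table in runs:
        component.run(table)
    return component.partitions
```

In this toy model, `simulate("full_resync")` ends with one partition, while the other three strategies end with two. The second Incremental partition holds only ids 1 and 3 and carries no trace of the deleted id 2, whereas the second Change Data Capture partition records that delete explicitly.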
