Snowflake

Learn the required and optional properties for creating a Snowflake Connection, Credential, Read Connector, and Write Connector.

Prerequisites

- Access credentials

- Snowflake account

- Warehouse and Database name

Connection Properties

The following table describes the fields available when creating a new Snowflake Connection. Create a connection using the information below and these step-by-step instructions.

| Field | Required | Description |
|---|---|---|
| Access Type | Required | Whether this Connection is Read-Only, Write-Only, or Read-Write. |
| Connection Name | Required | A name for this Connection. |
| Description | Optional | A description of this Connection. |
| Account | Required | Your Snowflake account identifier. A common choice is the concise "Account Locator in a Region" format, `<account_locator>.<region_id>.<cloud>`, for example `companyaccount.europe-west4.gcp`. Do not add the `.snowflakecomputing.com` suffix. |
| Warehouse | Required | Name of the warehouse to use. Individual Read and Write Connectors can override this warehouse. |
| Database Name | Required | Name of the default database to use. Individual Read and Write Connectors can override this database. |
| Optional Role Name | Optional | The role to use when connecting to Snowflake. If not specified, the user's default role is used. |
| Requires Credentials | Optional | If this checkbox is selected, choose an existing credential or create a new one for connecting to Snowflake. |
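The Account field expects the bare identifier, without the `.snowflakecomputing.com` suffix. The sketch below is a hypothetical pre-check for a value copied from a Snowflake URL; `normalize_account` and `connection_params` are illustrative names, not part of Ascend. The dictionary keys are assumed to line up with the keyword arguments of `snowflake.connector.connect` in the Snowflake Python connector, and the code does not open a connection.

```python
import re
from typing import Optional

# Suffix that must NOT appear in the Account field.
SUFFIX = ".snowflakecomputing.com"


def normalize_account(value: str) -> str:
    """Reduce a pasted Snowflake URL or host name to a bare account identifier."""
    account = value.strip()
    # Drop an optional http:// or https:// scheme and any trailing slash.
    account = re.sub(r"^https?://", "", account, flags=re.IGNORECASE).rstrip("/")
    # Remove the .snowflakecomputing.com suffix if it was included.
    if account.lower().endswith(SUFFIX):
        account = account[: -len(SUFFIX)]
    if not account:
        raise ValueError("account identifier is empty")
    return account


def connection_params(
    account: str,
    user: str,
    password: str,
    warehouse: str,
    database: str,
    role: Optional[str] = None,
) -> dict:
    """Hypothetical helper: collect Connection and Credential fields in one dict."""
    params = {
        "account": normalize_account(account),
        "user": user,
        "password": password,
        "warehouse": warehouse,
        "database": database,
    }
    # Omit the role when it is not set so the user's default role applies.
    if role:
        params["role"] = role
    return params
```

For example, `normalize_account("https://companyaccount.europe-west4.gcp.snowflakecomputing.com/")` returns `"companyaccount.europe-west4.gcp"`, the form shown in the table above.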

Credential Properties

The following table describes the fields available when creating a new Snowflake credential.

| Field | Required | Description |
|---|---|---|
| Credential Name | Required | A name that identifies this credential. The credential will be available for selection in the future. |
| Credential Type | Required | Automatically populated with Snowflake. |
| User | Required | Snowflake username to connect with. |
| Password | Required | Snowflake password to connect with. |

Warehouse, Database, and Role Name Overrides

If you have multiple warehouses, databases, and roles within Snowflake that share a single credential, you can either:

- Create a new Connection and reuse the Credential, or

- Use a single Connection and override the warehouse, database, and/or role name within each Read Connector and Write Connector.
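With a single Connection, each connector's override takes precedence and any override left blank falls back to the Connection value. A minimal sketch of that precedence, assuming blank overrides are represented as `None` or empty strings; the class and function names are hypothetical and do not come from Ascend:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ConnectionDefaults:
    """Hypothetical model of the values set once on the Connection."""
    warehouse: str
    database: str
    role: Optional[str] = None  # None: use the user's default role


@dataclass(frozen=True)
class ConnectorOverrides:
    """Hypothetical model of the optional per-connector override fields."""
    warehouse: Optional[str] = None
    database: Optional[str] = None
    role: Optional[str] = None


def effective_settings(conn: ConnectionDefaults, over: ConnectorOverrides) -> dict:
    """Return the settings a connector would use: overrides win, blanks fall back."""
    return {
        "warehouse": over.warehouse or conn.warehouse,
        "database": over.database or conn.database,
        # Still None if neither level sets a role, i.e. the user's default role.
        "role": over.role or conn.role,
    }


shared = ConnectionDefaults(warehouse="ANALYTICS_WH", database="PROD")
print(effective_settings(shared, ConnectorOverrides(database="STAGING")))
# {'warehouse': 'ANALYTICS_WH', 'database': 'STAGING', 'role': None}
```

Here one Connection serves a connector that reads from a staging database while keeping the shared warehouse and the user's default role.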

Read Connector Properties

The following table describes the fields available when creating a new Snowflake Read Connector. Create a new Read Connector using the information below and these step-by-step instructions.

| Field | Required | Description |

|---|---|---|

| Name | Required | Provide a name for your connector. We recommend using lowercase with underscores in place of spaces. |

| Description | Optional | Describes the connector. We recommend providing a description if you are ingesting information from the same source multiple times for different reasons. |

| Override Warehouse Name | Optional | Controls which warehouse Ascend will use for ingestion of the source table. This will override the Connection warehouse. |

| Override Database Name | Optional | Changes which database is used when reading that table. |

| Override Optional Role Name | Optional | Controls which database role is used when making the connection to read a specific table. |

| Table Name | Required | Name of the table to ingest. The table name is case-sensitive. Enclose it in double quotes if the table name is not all upper case. |

| Schema Name | Optional | The name of the Snowflake Schema. |

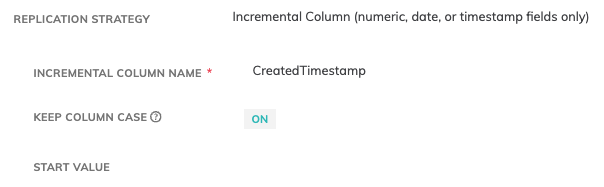

| Replication Strategy | Optional | Full Resync, Filter by column range, or Incremental column name. See Database Reading Strategies for more information. |

| Data Version | Optional | A change to Data Version results in no longer using data previously ingested by this Connector, and a complete ingest of new data. |
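The Table Name rule follows from how Snowflake resolves identifiers: unquoted names are treated as upper case, while double-quoted names are matched exactly. The hypothetical helper below suggests the value to enter for an existing table. It assumes the name is not a reserved word (those need quoting too) and is only a sketch, not Ascend's own validation.

```python
import re

# Valid unquoted identifier, already in upper case: letter or underscore first,
# then letters, digits, underscores, or dollar signs.
_UPPER_UNQUOTED = re.compile(r"[A-Z_][A-Z0-9_$]*")


def table_name_for_field(name: str) -> str:
    """Hypothetical helper: suggest the Table Name value for an existing table.

    Assumes `name` is the table's exact stored name and is not a reserved word.
    """
    if _UPPER_UNQUOTED.fullmatch(name):
        # Snowflake resolves unquoted names as upper case, so no quotes needed.
        return name
    # Anything else must be double-quoted; embedded quotes are doubled.
    return '"' + name.replace('"', '""') + '"'


for stored in ["ORDERS", "orderItems", "Order Items"]:
    print(stored, "->", table_name_for_field(stored))
# ORDERS -> ORDERS
# orderItems -> "orderItems"
# Order Items -> "Order Items"
```

Entering `orderItems` without quotes would be resolved as `ORDERITEMS`, which is a different table from one created as `"orderItems"`.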

Case-sensitivity with Incremental Ingest

If you use incremental ingest and your incremental column name is case-sensitive, make sure that you have selected the Keep Column Case option and that your Incremental Column exactly matches the column in your source table.
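To see why the exact match matters, consider a hypothetical incremental read that only fetches rows whose incremental column is greater than the last value seen. This is an assumed illustration, not the query Ascend issues: it quotes every identifier so the column case is kept, and it leaves the watermark as a `%(watermark)s` placeholder (the default `pyformat` parameter style of the Snowflake Python connector) instead of pasting the value into the SQL text.

```python
from typing import Sequence


def quote_ident(name: str) -> str:
    """Double-quote an identifier so Snowflake matches its case exactly."""
    return '"' + name.replace('"', '""') + '"'


def incremental_query(schema: str, table: str, column: str,
                      source_columns: Sequence[str]) -> str:
    """Hypothetical sketch of an incremental read on `column`.

    `schema`, `table`, and `column` are exact stored names (not pre-quoted).
    """
    # Case-sensitive comparison: "updatedAt" does not match UPDATEDAT.
    if column not in source_columns:
        raise KeyError(
            f"incremental column {column!r} not in source columns; names are case-sensitive"
        )
    col = quote_ident(column)
    # The last high-water mark is bound as a parameter, not formatted into the SQL.
    return (
        f"SELECT * FROM {quote_ident(schema)}.{quote_ident(table)} "
        f"WHERE {col} > %(watermark)s ORDER BY {col}"
    )


sql = incremental_query("PUBLIC", "orders", "updatedAt", ["id", "updatedAt"])
print(sql)
# SELECT * FROM "PUBLIC"."orders" WHERE "updatedAt" > %(watermark)s ORDER BY "updatedAt"
```

Asking for `UPDATEDAT` against the same source columns raises `KeyError`, which is the mismatch the note above warns about. With the Snowflake connector, the value would typically be supplied as a mapping such as `{"watermark": last_value}` when executing the statement.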

Write Connector Properties

The following table describes the fields available when creating a new Snowflake Write Connector. Create a new Write Connector using the information below and these step-by-step instructions.

| Field | Required | Description |
|---|---|---|
| Name | Required | A name for your connector. We recommend using lowercase with underscores in place of spaces. |
| Description | Optional | Describes the connector. We recommend providing a description if you are ingesting information from the same source multiple times for different reasons. |
| Upstream | Required | The name of the previous connector to pull data from. |
| Override Warehouse Name | Optional | The warehouse Ascend uses when writing the table. This overrides the Connection warehouse. |
| Override Database Name | Optional | The database used when writing the table. This overrides the Connection database. |
| Override Optional Role Name | Optional | The role used when connecting to write this table. This overrides the Connection role. |
| Table Name | Required | Name of the table to write to. The table name is case-sensitive; enclose it in double quotes if it is not entirely upper case. |
| Schema Name | Optional | The name of the Snowflake schema. |
| On Schema Mismatch | Optional | Select how you want Ascend to handle writing data if the schema indicated above does not match the schema of the table in Snowflake. Options are as follows: |
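The On Schema Mismatch options are not listed here, but the comparison behind a mismatch can be sketched. The hypothetical `compare_schemas` below reports columns missing from the target table, columns present only in the target table, and columns whose type differs. It assumes upstream column names are upper-cased unless Keep Column Case is selected, and it compares type names as plain strings, so Snowflake synonyms such as `TEXT` and `VARCHAR` would be reported as different.

```python
from typing import Dict, List


def compare_schemas(
    incoming: Dict[str, str], target: Dict[str, str], keep_case: bool = False
) -> Dict[str, List[str]]:
    """Hypothetical check: how does upstream data differ from the target table?

    `incoming` and `target` map column name -> Snowflake type name.
    """
    # Assumed default behavior: upstream names are upper-cased unless keep_case.
    src = {(n if keep_case else n.upper()): t.upper() for n, t in incoming.items()}
    dst = {n: t.upper() for n, t in target.items()}
    shared = src.keys() & dst.keys()
    return {
        "missing_in_table": sorted(src.keys() - dst.keys()),
        "extra_in_table": sorted(dst.keys() - src.keys()),
        # Plain string comparison: type synonyms are reported as changes.
        "type_changed": sorted(n for n in shared if src[n] != dst[n]),
    }


report = compare_schemas(
    {"id": "NUMBER", "name": "VARCHAR", "created": "TIMESTAMP_NTZ"},
    {"ID": "NUMBER", "NAME": "NUMBER", "LEGACY": "VARCHAR"},
)
print(report)
# {'missing_in_table': ['CREATED'], 'extra_in_table': ['LEGACY'], 'type_changed': ['NAME']}
```

An empty report means the schemas line up under these assumptions; any non-empty list is the kind of difference the On Schema Mismatch setting decides how to handle.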

Schema Overrides

The following fields are used when overriding the schema of an existing table within Snowflake.

| Field | Required | Description |
|---|---|---|
| Clustering Keys | Optional | The columns (or expressions) to use as the clustering key for the table in Snowflake. |
| Keep Column Case | Optional | Preserve the original case of column names. By default, column names are converted to upper case. |
| Max Number of Parallel Ascend Partitions | Optional | The maximum number of partitions Ascend writes in parallel. |
| Load Timestamp Column Name | Optional | When provided, Ascend adds a column with this name containing the load timestamp. |
| Disable Transactional Write | Optional | Disables transactional writes, which are enabled by default. |
| A SQL Statement for Ascend to Execute Before Writing | Optional | A pre-processing SQL statement to execute before writing to the final table. |
| A SQL Statement for Ascend to Execute After Writing | Optional | A post-processing SQL statement to execute after writing to the final table. |
| Data Version | Optional | Changing the Data Version causes the Connector to stop using previously ingested data and to perform a complete ingest of new data. |
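Several of these overrides shape the table definition. The sketch below assembles a Snowflake `CREATE TABLE` statement from a column map, applying the default upper-casing unless `keep_case` is set, appending a load timestamp column, and adding a `CLUSTER BY` clause for clustering keys. It is a hypothetical illustration: the function name, the `TIMESTAMP_LTZ` type for the load timestamp column, and applying the same case rule to that column are assumptions, and Ascend may generate different DDL.

```python
from typing import Dict, List, Optional


def quote_ident(name: str) -> str:
    """Double-quote an identifier so its case is kept exactly."""
    return '"' + name.replace('"', '""') + '"'


def create_table_sql(
    table: str,
    columns: Dict[str, str],
    keep_case: bool = False,
    load_ts_column: Optional[str] = None,
    clustering_keys: Optional[List[str]] = None,
) -> str:
    """Hypothetical DDL builder; `table` is used verbatim, as entered in Table Name."""

    def cased(name: str) -> str:
        # Default: upper-case column names; Keep Column Case leaves them alone.
        return name if keep_case else name.upper()

    cols = [f"{quote_ident(cased(n))} {t}" for n, t in columns.items()]
    if load_ts_column:
        # Assumed type and casing for the load timestamp column.
        cols.append(f"{quote_ident(cased(load_ts_column))} TIMESTAMP_LTZ")
    sql = f"CREATE TABLE {table} ({', '.join(cols)})"
    if clustering_keys:
        keys = ", ".join(quote_ident(cased(k)) for k in clustering_keys)
        sql += f" CLUSTER BY ({keys})"
    return sql


print(create_table_sql(
    "ORDERS",
    {"id": "NUMBER", "orderDate": "DATE"},
    load_ts_column="loaded_at",
    clustering_keys=["orderDate"],
))
# CREATE TABLE ORDERS ("ID" NUMBER, "ORDERDATE" DATE, "LOADED_AT" TIMESTAMP_LTZ) CLUSTER BY ("ORDERDATE")
```

With `keep_case=True`, the same call would keep `"id"`, `"orderDate"`, and `"loaded_at"` as written, so downstream queries must quote those names exactly.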

Updated 11 months ago